Criticality Analysis - Part II: Practical implementation

The first part described Asset Criticality Assessment (ACA) as a foundation of Asset Management. It answered questions about the purpose of conducting it, the areas where it can be applied, and the value it delivers.

>> Criticality Analysis - Part I: The foundation of rational decision-making in Asset Management <<

In the second part, you will find a practical process for implementing Asset Criticality Assessment. It is compiled from many projects of this type that we have delivered and serves as a starting point for new initiatives. This does not mean, however, that the process always looks the same. Asset Criticality Assessment is intended to create value for YOUR organization, so adapting both the implementation steps and the analytical elements is a natural consequence of that objective.

Elements of Asset Criticality Assessment

Before moving to the description of the implementation process itself, let us briefly reflect on the elements of Asset Criticality Assessment.

- Asset Structure / Technical Asset Hierarchy – a complete listing of functional locations (understood as logical objects) and equipment (understood as physical objects) that are subject to the criticality assessment.

- Analysis Model – often referred to as the Criticality Ranking Model, meaning a defined set of criteria, their parameters, values, and descriptions. The Analysis Model is an organization-specific tool that explains why particular assets can be treated as high-, medium-, or low-critical. When building the model, it is common to define example ratings for representative technical objects.

- Detailed Assessments – the individual results of the criticality assessment performed for technical objects. Obtaining detailed assessments is the most labor-intensive part of ACA.

- Aggregation Model – a set of rules used by the organization to determine the final criticality level of an asset. The Aggregation Model contains all mathematical conversion rules (points, weights, ranges) as well as logical rules that ultimately lead to the final criticality level.

- Asset Criticality – the final criticality level of an asset resulting from the detailed assessments and the application of the selected Aggregation Model. Asset Criticality may take the form of classes (e.g., A, B, C, D) or be expressed on a linear scale. In the vast majority of organizations, one single criticality level is defined. However, in some cases, ACA produces two final values.

In addition to the key outputs created during ACA, a supporting tool is used in the background. This may be a customized Excel sheet, a CMMS/EAM system, an APM (Asset Performance Management) system, or a tool dedicated specifically to Asset Criticality Assessment.

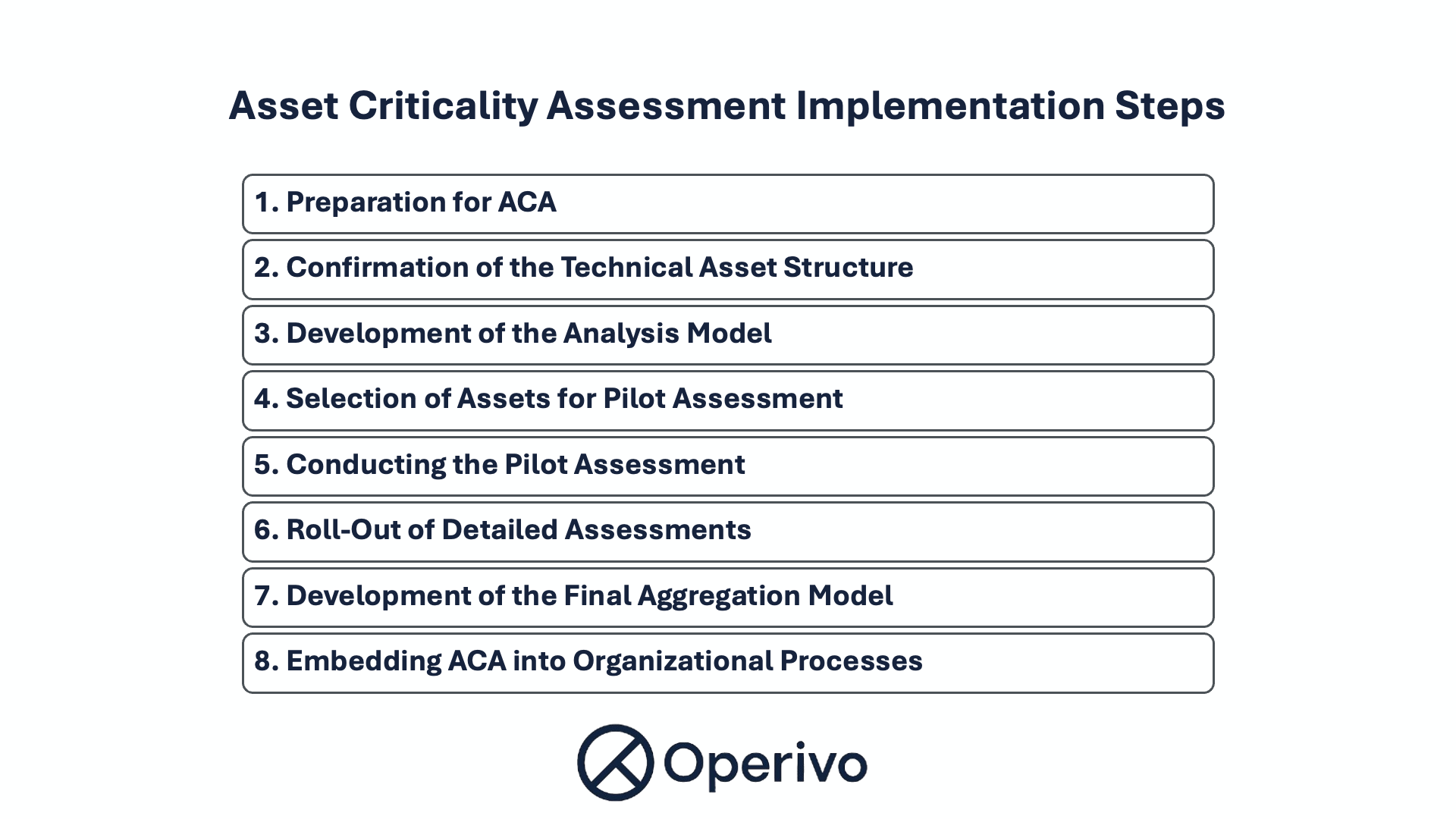

Asset Criticality Assessment Implementation Steps

Having defined the elements of ACA, we can now move to the process of developing them. At Operivo, the Asset Criticality Assessment process is structured as follows:

1. Preparation for ACA

This phase includes the formal aspects of the project:

- defining the objectives of ACA, its assumptions, and the business processes in which it will be used,

- appointing the project team,

- selecting or developing the ACA tool,

- conducting the project kick-off meeting.

2. Confirmation of the Technical Asset Structure and Other Required Data

The asset structure is a fundamental element of the assessment. If it is outdated or if the hierarchy has not been developed in a consistent way, a significant portion of the time during the detailed assessment phase will be spent correcting the structure, which is inefficient.

In one recent project, after reviewing the structure and identifying significant inconsistencies, we nevertheless proceeded with detailed assessments at the client’s request. Instead of conducting evaluations efficiently, the teams continuously pointed out additional assets that should have been included in the assessment. After completing the first round of detailed assessments, the project had to be extended by two additional phases: structural corrections and re-assessment.

If one of the initial assumptions of ACA is the use of additional data, such as failure history or spare parts consumption history, their availability and reliability should also be confirmed at this stage.

3. Development of the Analysis Model (Criticality Ranking Model)

The Analysis Model is developed together with the client’s project team, which should include representatives of all stakeholders whose work will be affected by the ACA results. Typically, these include Production, Maintenance, HSE, Fire Protection, Quality, Engineering/Investments, Environment, and Logistics.

The set of criteria and parameters is selected by answering the fundamental question: “What makes a particular technical asset critical?”

The workshops usually produce a list of 4–7 criteria represented by 7–11 parameters. Most often, each parameter is defined on three levels of significance (A, B, C). Parameters may also take a linear (numerical) or binary (Yes/No) form.

The Analysis Model is considered ready for further use when the perspective of each stakeholder is represented and when the qualification of an asset into a specific criticality class within each criterion is clear and unambiguous.
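To make the idea of "clear and unambiguous" qualification more tangible, here is a minimal sketch of how an Analysis Model could be held as data and used to check a single detailed assessment. The criterion names, parameter names, and level descriptions are hypothetical examples, not taken from a specific project, and a real model would usually contain 4–7 criteria.

```python
from dataclasses import dataclass


@dataclass
class Parameter:
    name: str
    levels: dict  # level code ("A", "B", "C") -> description of that level


@dataclass
class Criterion:
    name: str
    parameters: list


# Hypothetical fragment of an Analysis Model (illustrative only).
MODEL = [
    Criterion("HSE", [
        Parameter("Injury potential", {
            "A": "Failure may cause a lost-time injury or worse",
            "B": "Failure may cause a minor injury",
            "C": "No injury potential",
        }),
    ]),
    Criterion("Production", [
        Parameter("Downtime impact", {
            "A": "Stops the whole line",
            "B": "Reduces line throughput",
            "C": "No effect on throughput",
        }),
    ]),
]


def validate_assessment(model, ratings):
    """Return a list of problems in one asset's detailed assessment.

    ratings maps parameter name -> chosen level, e.g. {"Injury potential": "A"}.
    """
    problems = []
    for criterion in model:
        for param in criterion.parameters:
            if param.name not in ratings:
                problems.append(f"missing rating: {param.name}")
            elif ratings[param.name] not in param.levels:
                problems.append(
                    f"invalid level {ratings[param.name]!r} for {param.name}"
                )
    return problems
```

Keeping the level descriptions next to the level codes means the same structure can feed both the assessment form and the validation of collected ratings.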

4. Selection of Assets for Pilot Assessment and Definition of Expected Criticality Levels

This step is not always implemented, but we recommend considering it, as it reduces the risk of later corrections to the Analysis Model once detailed assessments have already been performed for a significant group of assets.

One of the fundamental assumptions of ACA is to identify approximately 5–15% of assets whose importance to the organization is the highest according to the objective criteria defined in the Analysis Model. An additional important aspect is to avoid a situation in which the ACA results significantly differ from the organization’s existing understanding of asset criticality. For this purpose, a pilot assessment is conducted on a limited sample of technical assets. To identify potential discrepancies between obtained results and existing perceptions, the expected criticality level of selected assets is defined in advance.

The recommended pilot sample should include 40–100 assets from different technical areas or systems. The expected distribution should typically assume 5–15% at the highest criticality level, 20–40% at the lowest level, and the remainder at intermediate levels.
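The sample-size and distribution guidelines above can be checked mechanically before the pilot starts. The sketch below assumes the expected criticality levels are recorded as a simple mapping from asset ID to class, with "A" as the highest class and "C" as the lowest; the function name and asset IDs are hypothetical.

```python
from collections import Counter


def check_pilot_sample(expected, top="A", bottom="C"):
    """Compare a pilot sample with the recommended size and distribution.

    expected maps asset ID -> expected criticality class.
    """
    n = len(expected)
    counts = Counter(expected.values())
    top_share = counts[top] / n if n else 0.0
    bottom_share = counts[bottom] / n if n else 0.0
    return {
        "size_ok": 40 <= n <= 100,            # 40-100 assets in the sample
        "top_share": top_share,
        "top_ok": 0.05 <= top_share <= 0.15,  # 5-15% at the highest level
        "bottom_share": bottom_share,
        "bottom_ok": 0.20 <= bottom_share <= 0.40,  # 20-40% at the lowest level
    }


# Hypothetical pilot sample: 5 x A, 30 x B, 15 x C.
classes = ["A"] * 5 + ["B"] * 30 + ["C"] * 15
sample = {f"EQ-{i:03d}": cls for i, cls in enumerate(classes)}
report = check_pilot_sample(sample)
```

A failed check is a prompt to revisit the sample, not a hard rule; the ranges are typical values rather than fixed limits.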

It is worth remembering that the result of ACA is not only awareness of which assets are the most critical, but also which assets are associated with the lowest risk. Knowing which equipment is least critical can be highly valuable when seeking time and cost savings.

5. Conducting the Pilot Assessment and Development of the Initial Aggregation Model

The objective of the pilot assessment is to verify whether each criterion and parameter “works,” meaning that it creates visible differentiation between assets.

To conduct the pilot assessment, it is necessary to have a list of selected assets with predefined expected ratings (these must be defined before the assessment begins) as well as the Analysis Model.

At this stage, detailed assessments are performed only for the limited pilot group. Based on the results, the first version of the Aggregation Model is developed.

At this point, adjustments to the Analysis Model may be required. The pilot is successful when every criterion differentiates between assets and the Aggregation Model yields final criticality results consistent with the expected distribution.
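Both pilot checks can be sketched in a few lines: whether a parameter differentiates between assets, and where obtained results diverge from the expected levels. The 90% threshold used to flag a non-differentiating parameter is our assumption for illustration, and the asset IDs and parameter names are hypothetical.

```python
from collections import Counter


def non_differentiating(assessments, max_share=0.9):
    """Flag parameters where one level covers more than max_share of assets.

    assessments maps asset ID -> {parameter name: level}.
    Returns {parameter: (dominant level, its share)}.
    """
    by_param = {}
    for ratings in assessments.values():
        for param, level in ratings.items():
            by_param.setdefault(param, Counter())[level] += 1
    flagged = {}
    for param, counts in by_param.items():
        level, count = counts.most_common(1)[0]
        share = count / sum(counts.values())
        if share > max_share:
            flagged[param] = (level, share)
    return flagged


def mismatches(expected, obtained):
    """Return {asset ID: (expected class, obtained class)} where they differ."""
    return {
        asset: (expected[asset], obtained.get(asset))
        for asset in expected
        if obtained.get(asset) != expected[asset]
    }


pilot = {
    "P-101": {"Injury potential": "A", "Noise": "C"},
    "P-102": {"Injury potential": "C", "Noise": "C"},
}
flagged = non_differentiating(pilot)
diffs = mismatches({"P-101": "A", "P-102": "C"}, {"P-101": "B", "P-102": "C"})
```

A flagged parameter or a mismatch is a topic for discussion with the project team; it may point to a weakness in the model, or to an expectation that was wrong.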

6. Roll-Out of Detailed Assessments Across the Entire Asset Base

If the ACA project is implemented in a large organization by multiple teams, training becomes necessary, especially for individuals who were not involved in the earlier stages of methodology development. Training should also include planning the meeting structure, work rhythm, and reporting.

The recommended approach during roll-out is to collect detailed assessments for each criterion without displaying the final Asset Criticality score. There are two main reasons for this:

- First, visibility of the final score may influence assessors to adjust ratings upward or downward.

- Second, the final score may change later during the refinement of the Aggregation Model.

If detailed assessments are intended to be based on data available in IT systems, roll-out means implementing the developed rules within those systems and generating results in an automated way, minimizing human bias.

During the detailed assessment phase, it is advisable to plan quality assurance mechanisms to ensure consistency and reliability of results. If multiple teams (for example, in four different locations) assess similar types of assets, cross-verification of approaches is recommended.
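One simple form of cross-verification is to compare how often different teams assign the highest rating to the same type of asset. The sketch below is only an illustration: the record layout, the 25-percentage-point tolerance, and the site and asset-type names are all assumptions.

```python
from collections import Counter, defaultdict


def cross_check(records, tolerance=0.25):
    """Find asset types where teams differ strongly in their share of "A" ratings.

    records is an iterable of (team, asset_type, ratings) tuples,
    where ratings maps parameter name -> level.
    """
    # (asset_type, parameter) -> team -> Counter of levels
    table = defaultdict(lambda: defaultdict(Counter))
    for team, asset_type, ratings in records:
        for param, level in ratings.items():
            table[(asset_type, param)][team][level] += 1

    findings = []
    for (asset_type, param), teams in table.items():
        shares = {t: c["A"] / sum(c.values()) for t, c in teams.items()}
        spread = max(shares.values()) - min(shares.values())
        if len(shares) > 1 and spread > tolerance:
            findings.append((asset_type, param, shares))
    return findings


records = [
    ("Site 1", "Centrifugal pump", {"Downtime impact": "A"}),
    ("Site 1", "Centrifugal pump", {"Downtime impact": "A"}),
    ("Site 2", "Centrifugal pump", {"Downtime impact": "C"}),
    ("Site 2", "Centrifugal pump", {"Downtime impact": "B"}),
]
findings = cross_check(records)
```

A finding does not prove that one team is wrong, since similar assets can play different roles at different sites. It only indicates where the teams should compare their reasoning.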

7. Development of the Final Aggregation Model

The final version of the Aggregation Model is developed after at least 80% of detailed assessments have been completed.

The Aggregation Model may include mechanisms such as:

- converting detailed ratings into point values (e.g., A = 5, B = 3, C = 1),

- assigning weights to specific criteria (e.g., HSE = 2; Production impact = 1.5; Reputation impact = 0.5),

- converting linear and binary criteria into classes and points,

- defining calculation logic (sum, multiplication, average, maximum value),

- translating point totals into final classes using defined ranges, and in some cases introducing logical conditions (e.g., if HSE rating = A, the final criticality cannot be lower than B regardless of total points).
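The mechanisms listed above can be combined as in the following minimal sketch. The point values, weights, and the HSE rule are the examples given in the list; the weighted sum, the three final classes, and the threshold ranges are assumptions chosen only for illustration.

```python
# Example values from the list above.
POINTS = {"A": 5, "B": 3, "C": 1}
WEIGHTS = {"HSE": 2.0, "Production impact": 1.5, "Reputation impact": 0.5}

# Hypothetical ranges: minimum weighted total for each final class, highest first.
THRESHOLDS = [(16.0, "A"), (13.0, "B"), (0.0, "C")]
CLASS_ORDER = "ABC"  # "A" is the most critical class


def final_criticality(ratings):
    """Return (weighted total, final class) for one asset.

    ratings maps criterion name -> detailed rating ("A", "B" or "C").
    """
    # Calculation logic: weighted sum of points.
    total = sum(POINTS[ratings[c]] * w for c, w in WEIGHTS.items())
    # Translate the total into a class using the defined ranges.
    final = next(cls for minimum, cls in THRESHOLDS if total >= minimum)
    # Logical rule: if HSE = A, the final class cannot be lower than B.
    if ratings["HSE"] == "A" and CLASS_ORDER.index(final) > CLASS_ORDER.index("B"):
        final = "B"
    return total, final


# Total 12.0 falls in the range for "C", but the HSE rule lifts the result to "B".
result = final_criticality(
    {"HSE": "A", "Production impact": "C", "Reputation impact": "C"}
)
```

Keeping points, weights, and ranges as plain data rather than hard-coding them in the logic makes it easier to adjust the model later without touching the detailed assessments.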

It is common practice to develop several Aggregation Model variants and compare the resulting distributions before selecting the one best aligned with organizational needs.
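Comparing variants comes down to running the same detailed assessments through each candidate model and looking at the resulting class shares. In the sketch below, the variant names, weights, thresholds, and asset data are all hypothetical.

```python
from collections import Counter

POINTS = {"A": 5, "B": 3, "C": 1}


def distribution(assessments, weights, thresholds):
    """Return {class: share of assets} for one Aggregation Model variant.

    thresholds is a list of (minimum total, class), highest minimum first.
    """
    counts = Counter()
    for ratings in assessments.values():
        total = sum(POINTS[ratings[c]] * w for c, w in weights.items())
        counts[next(cls for minimum, cls in thresholds if total >= minimum)] += 1
    n = len(assessments)
    return {cls: (counts[cls] / n if n else 0.0) for _, cls in thresholds}


assessments = {
    "P-101": {"HSE": "A", "Production": "A"},
    "P-102": {"HSE": "B", "Production": "C"},
    "P-103": {"HSE": "C", "Production": "A"},
    "P-104": {"HSE": "C", "Production": "C"},
}

# Two hypothetical variants: (weights, thresholds).
variants = {
    "equal": ({"HSE": 1, "Production": 1}, [(8, "A"), (4, "B"), (0, "C")]),
    "hse_heavy": ({"HSE": 2, "Production": 1}, [(12, "A"), (8, "B"), (0, "C")]),
}

comparison = {
    name: distribution(assessments, weights, thresholds)
    for name, (weights, thresholds) in variants.items()
}
```

Placing the resulting distributions side by side gives stakeholders a concrete basis for choosing a variant, instead of debating weights in the abstract.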

The success criterion at this stage is obtaining final criticality results and achieving stakeholder acceptance.

ACA results left in an Excel sheet or, worse, in a printed binder will most likely go unused after the project ends. It is good practice to define at the beginning where the results will be embedded (e.g., CMMS/EAM) and to import them after the Aggregation Model has been finalized and approved. It should also be remembered that Asset Criticality may change over time, and every new asset added to the structure must be assessed accordingly.

8. Embedding ACA into Organizational Processes

If you want to avoid a situation where ACA becomes a document pulled out only for audit purposes, you must embed it into organizational processes.

Typical integration includes:

- defining a formal periodic review process for ACA,

- incorporating Asset Criticality into processes such as capital replacement prioritization, spare parts criticality analysis, work prioritization, and budgeting,

- using Asset Criticality in initiatives such as preventive maintenance optimization (PMO), modernization projects, and Root Cause Failure Analysis (RCFA).

Institutionalizing ACA is the only way to achieve real, long-term value from the project.

Summary

At first glance, the full ACA implementation process may appear complex. In reality, most assessments include selected elements rather than the entire framework. The process and its components must not be more complex than the organization’s ability to maintain them. Much depends on the size of the organization and on what it expects from the assessment.

If this topic is relevant to your organization and you would like to discuss specific challenges or applications of Asset Criticality Assessment, feel free to reach out.

Operivo Sp. z o.o.

Aleja Jana Pawła II 27

00-867 Warsaw

+48 533 373 200

europe@operivo.com

Copyright @ Operivo 2025